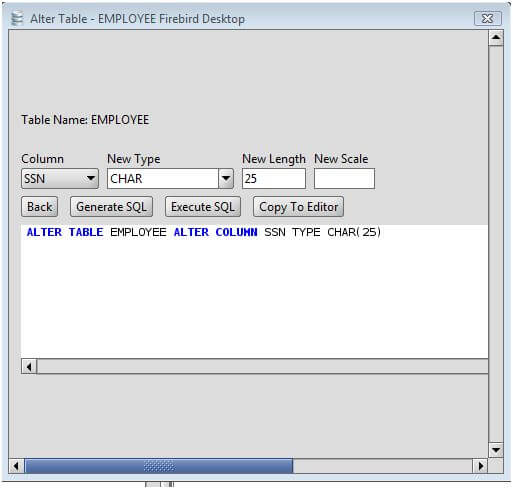

In February, Amazon released some interesting features related to Amazon Redshift. One of them is a new DDL statement, "ALTER TABLE APPEND".

When you need to migrate data from one table to another, you can use the "CREATE TABLE AS" statement. But there are situations where that query can be quite sluggish, especially when the source table is very large, since copying large quantities of data is time-consuming and cumbersome. "ALTER TABLE APPEND" moves records from the source table to the target table instead of copying them, which lets you move the data much faster and more efficiently. Your source and destination tables must have the same columns and data types, though the statement allows some flexibility for mismatches when you use the "IGNOREEXTRA" or "FILLTARGET" option on tables that do not conform.

Pro Tip: When you have operations running in a data warehouse, be cautious about how you write data, since writing is generally the most expensive operation. Extra effort and careful thinking early on will save you time in the future.

Nested data scenarios: These scenarios apply when Stitch loads nested data.

Schema change scenarios: These scenarios apply when a table undergoes structural changes.

If the RedshiftWriter application in this post were deployed to the namespace RS1, the staging area in S3 would be mys3bucket/RS1/PosSource_TransformedStream_Type/mytable.

Redshift won't change column type: "I'm trying to change a column in Redshift from varchar to integer. I've already checked and the strings are all numbers, so it should convert fine." Redshift's ALTER TABLE cannot convert a varchar column to integer in place, so the workaround is to rebuild the column:

1. Alter the table to add newcolumn.
2. Update newcolumn with the value of oldcolumn.
3. Alter the table to drop oldcolumn.
4.

Changing a column's data type can also have side effects: for example, changing the data type of a column that is part of the sharding key would lead to a redistribution of data.
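The numbered steps can be sketched as a small Python helper that emits the SQL, together with a pre-check for the "strings are all numbers" assumption from the question. This is a hypothetical helper, not part of any library: it assumes a 4-byte INTEGER target and plain ASCII digits with an optional sign, it covers only steps 1 to 3, and the table and column names in the usage are made up.

```python
import re
from typing import Iterable, List, Optional

# Optional sign followed by ASCII digits only (no spaces, no decimals).
_INT_RE = re.compile(r"[+-]?[0-9]+")
INT4_MIN, INT4_MAX = -(2**31), 2**31 - 1  # range of a 4-byte INTEGER


def fits_integer(values: Iterable[Optional[str]]) -> bool:
    """Return True if every non-NULL string parses as an in-range INTEGER."""
    for v in values:
        if v is None:  # NULL stays NULL after the cast
            continue
        if _INT_RE.fullmatch(v) is None or not INT4_MIN <= int(v) <= INT4_MAX:
            return False
    return True


def change_column_type_sql(table: str, old_col: str, new_col: str, new_type: str) -> List[str]:
    """Steps 1-3: add the new column, copy with a cast, drop the old column.

    Identifiers are interpolated as-is, so only pass trusted names.
    """
    return [
        f"ALTER TABLE {table} ADD COLUMN {new_col} {new_type};",
        f"UPDATE {table} SET {new_col} = CAST({old_col} AS {new_type});",
        f"ALTER TABLE {table} DROP COLUMN {old_col};",
    ]


if __name__ == "__main__":
    sample = ["1", "-42", None, "2147483647"]  # hypothetical column values
    if fits_integer(sample):
        for stmt in change_column_type_sql("orders", "qty", "qty_int", "INTEGER"):
            print(stmt)
```

Checking the data first matters because a single non-numeric or out-of-range string makes the UPDATE's cast fail partway through the rebuild.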
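Returning to "ALTER TABLE APPEND": the column rule described at the start of this post can be checked before running the statement. The sketch below is a hypothetical Python helper that takes column-name-to-type maps for the source and target tables and decides whether IGNOREEXTRA, FILLTARGET, or neither is needed. It assumes the two keywords cannot be combined and that shared columns must have identical types; it compares type strings literally (ignoring case), so synonyms such as INT and INTEGER count as different, and it does not check any other column attributes.

```python
from typing import Dict, Optional


def choose_append_option(source: Dict[str, str], target: Dict[str, str]) -> Optional[str]:
    """Return None, "IGNOREEXTRA" or "FILLTARGET" for two column maps."""
    # Columns present in both tables must have the same type (case-insensitive compare).
    for name in sorted(source.keys() & target.keys()):
        if source[name].upper() != target[name].upper():
            raise ValueError(f"type mismatch on column {name!r}")
    extra_in_source = source.keys() - target.keys()
    extra_in_target = target.keys() - source.keys()
    if extra_in_source and extra_in_target:
        # The two keywords can't be used together, so neither fixes this case.
        raise ValueError("both tables have columns the other lacks")
    if extra_in_source:
        return "IGNOREEXTRA"  # discard the source-only columns
    if extra_in_target:
        return "FILLTARGET"  # target-only columns get their DEFAULT or NULL
    return None


def append_statement(target_table: str, source_table: str,
                     source: Dict[str, str], target: Dict[str, str]) -> str:
    """Build an ALTER TABLE APPEND statement (identifiers are not quoted)."""
    option = choose_append_option(source, target)
    stmt = f"ALTER TABLE {target_table} APPEND FROM {source_table}"
    return f"{stmt} {option};" if option else f"{stmt};"
```

For example, with hypothetical tables, `append_statement("sales", "sales_staging", {"id": "INTEGER", "amt": "DECIMAL(10,2)", "note": "VARCHAR(20)"}, {"id": "INTEGER", "amt": "DECIMAL(10,2)"})` returns `ALTER TABLE sales APPEND FROM sales_staging IGNOREEXTRA;`.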
Databases that use MySQL-style syntax can change several column types in one statement: `ALTER TABLE products MODIFY COLUMN productid int, MODIFY COLUMN supplier VARCHAR(4)`. Note that changing a data type has implications for primary key and sharding enforcement.

This package, developed by the dbt Labs team, lets us CREATE external tables, REFRESH partitions, and DROP and ALTER external tables within Amazon Redshift.

The RazorSQL alter table tool includes an Add Column option for adding columns to Sybase.

Use date/time data types for date columns.

The Redshift application in this post is defined with statements such as `CREATE SOURCE PosSource USING FileReader (`, `CREATE TARGET testRedshiftTarget USING RedshiftWriter(`, `ConnectionURL: 'jdbc:redshift://.:5439/dev',` and `SecretAccessKey:'****************************************',`. The staging area in S3 will be created at the path / / /. See Replicating Oracle data to Amazon Redshift for an example.

Source and target tables are given as pairs, for example `source.db1,target.db1` and `source.db2,target.db2`. Note that SQL Server source table names must be specified in three parts when the source is Database Reader or Incremental Batch Reader (database.schema.%,schema.%) but in two parts when the source is MS SQL Reader or MS Jet (schema.%,schema.%).

Note that Oracle CDB/PDB source table names must be specified in two parts when the source is Database Reader or Incremental Batch Reader (schema.%,schema.%) but in three parts when the source is Oracle Reader or OJet (database.schema.%,schema.%).
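The two naming notes above can be encoded as a lookup table. This is a hypothetical Python helper, not part of any reader's API; it splits names on "." and so does not handle quoted identifiers that contain dots.

```python
from typing import Dict, Tuple

# (source database, reader) -> number of dot-separated parts expected
# in the source table name, taken from the two notes above.
EXPECTED_PARTS: Dict[Tuple[str, str], int] = {
    ("SQL Server", "Database Reader"): 3,
    ("SQL Server", "Incremental Batch Reader"): 3,
    ("SQL Server", "MS SQL Reader"): 2,
    ("SQL Server", "MS Jet"): 2,
    ("Oracle CDB/PDB", "Database Reader"): 2,
    ("Oracle CDB/PDB", "Incremental Batch Reader"): 2,
    ("Oracle CDB/PDB", "Oracle Reader"): 3,
    ("Oracle CDB/PDB", "OJet"): 3,
}


def has_expected_parts(database: str, reader: str, source_name: str) -> bool:
    """Check that a source name such as 'mydb.dbo.%' has the right number of parts."""
    expected = EXPECTED_PARTS[(database, reader)]  # KeyError for combinations not listed
    return len(source_name.split(".")) == expected
```

Note the asymmetry the table makes visible: the same reader (Database Reader) expects three parts for SQL Server but two for Oracle CDB/PDB.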

If the reader uses three-part names, you must use them here as well. You may use the % wildcard only for tables, not for schemas or databases. When the input stream of the target is the output of a DatabaseReader, IncrementalBatchReader, or SQL CDC source (that is, when replicating data from one database to another), it can write to multiple tables; in this case, specify the names of both the source and target tables. The table(s) must exist in Redshift, and the user specified in Username must have insert permission.

To learn more about the types of LOD expressions you can. The newly created LOD expression is added to the Data pane, under Measures.
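A sketch of reading source/target pairs such as source.db1,target.db1 and source.db2,target.db2 while enforcing the wildcard rule above. The ";" separator between pairs is an assumption (the example in this post only shows the pairs themselves), and `parse_tables` is a hypothetical helper.

```python
from typing import List, Tuple


def parse_tables(value: str) -> List[Tuple[str, str]]:
    """Split a table-mapping string into (source, target) pairs.

    Assumes ';' between pairs and ',' between source and target.
    Raises ValueError for malformed pairs or a '%' outside the table part.
    """
    pairs: List[Tuple[str, str]] = []
    for entry in value.split(";"):
        entry = entry.strip()
        if not entry:
            continue  # tolerate a trailing ';'
        parts = [p.strip() for p in entry.split(",")]
        if len(parts) != 2:
            raise ValueError(f"expected 'source,target', got {entry!r}")
        for name in parts:
            # Everything before the last dot is a database or schema qualifier.
            *qualifiers, _table = name.split(".")
            if any("%" in q for q in qualifiers):
                raise ValueError(f"'%' is only allowed in the table part: {name!r}")
        pairs.append((parts[0], parts[1]))
    return pairs
```

For instance, `parse_tables("mydb.dbo.%,public.%")` is accepted, while a hypothetical `mydb.%.orders` source is rejected because the wildcard sits in the schema position.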

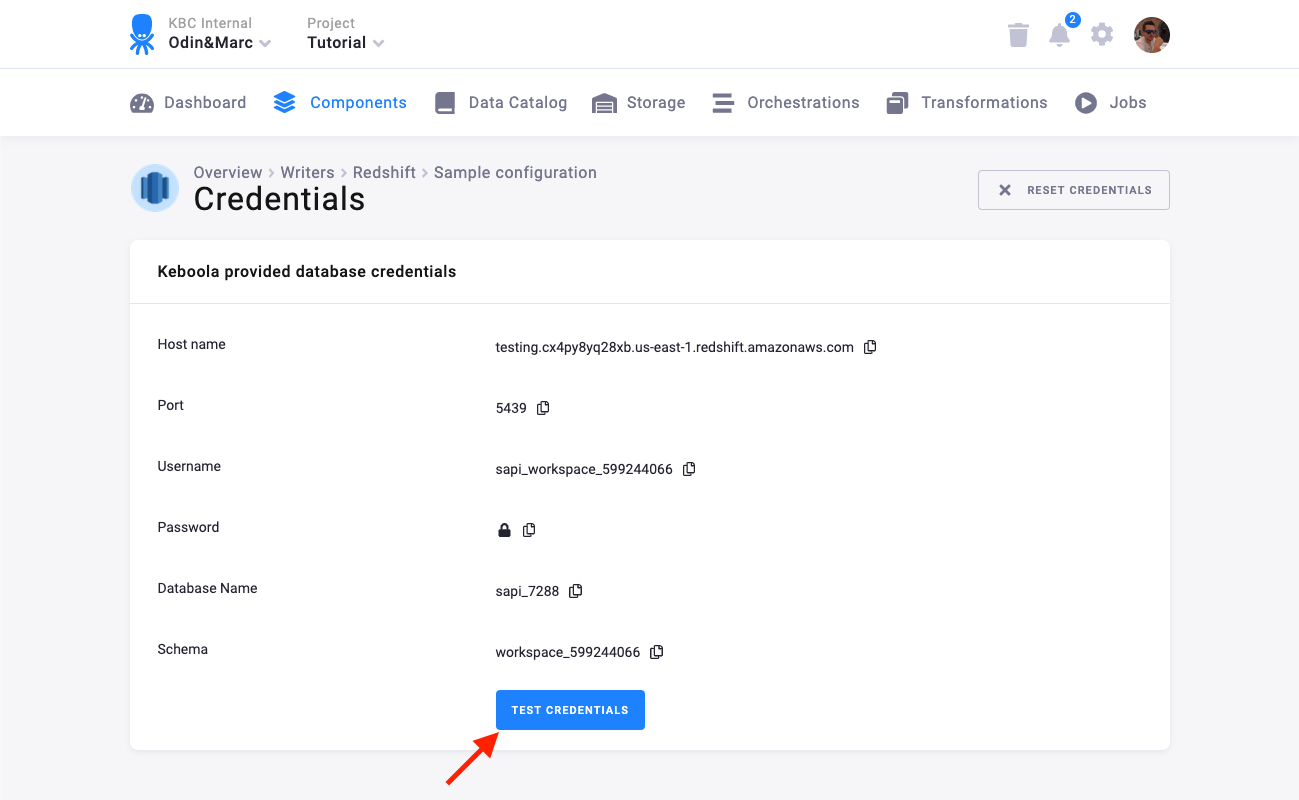

When migrating your queries, change any occurrences of the Amazon Redshift CONVERT(type. Provides a reference to compare statements, functions, and data types.

The secret access key for the S3 staging area. An AWS IAM role with read/write permission on the bucket (leave blank if using an access key). If the S3 staging area is in a different AWS region (not recommended), specify it here (see AWS Regions and Endpoints).

With an input stream of a user-defined type, do not change the default. See Replicating Oracle data to Amazon Redshift for more information.

For example, ConversionParams: 'IGNOREHEADER=2, NULL AS="NULL", ROUNDEC'

The character(s) used to quote (escape) field values in the delimited text files in which the adapter accumulates batched data. If the data will contain ", change the default value to a sequence of characters that will not appear in the data.
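A minimal sketch of the quote-character advice: scan the values and return the first candidate sequence that never appears in them. The default `"` comes from the text above; the other candidates are hypothetical, and in practice you would also want the choice to differ from the field delimiter.

```python
from typing import Iterable, Sequence

# '"' is the default mentioned above; the remaining candidates are hypothetical.
CANDIDATES = ('"', "~", "^", "|~|")


def choose_quote_sequence(values: Iterable[str], candidates: Sequence[str] = CANDIDATES) -> str:
    """Return the first candidate that does not occur in any value."""
    data = list(values)  # materialize so each candidate can rescan the values
    for cand in candidates:
        if not any(cand in v for v in data):
            return cand
    raise ValueError("every candidate appears in the data; supply a longer sequence")
```

For example, `choose_quote_sequence(['say "hi"', "plain"])` returns `"~"`, because the default `"` occurs in the first value. Multi-character candidates such as `|~|` are a fallback for data that already uses every single-character option.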
